How user needs shape government services

What changes across these stages is the understanding of the problem itself. Needs that seemed clear at the beginning can shift once they’re tested against real systems, real policies, and real user behaviour. Something that looked like a usability issue might turn out to be a policy constraint. A need that appeared to affect a small group might become more significant at scale. As more evidence comes in, teams often revisit earlier assumptions, reshape needs, and adjust priorities.

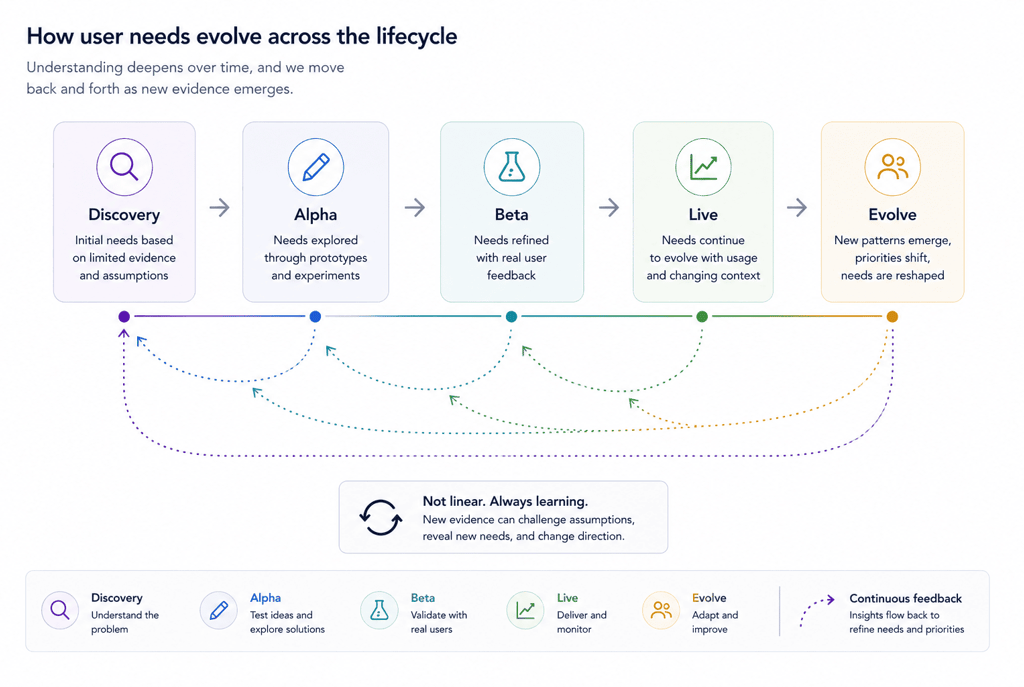

This doesn’t follow a clean, linear sequence. Teams move back and forth between understanding and delivery, responding to new information as it appears. Work done in Beta can trigger new Discovery, and insights from Live can reshape earlier decisions. This overlap reflects how services actually evolve in practice. It also fits the broader picture of what service design in government entails, where lifecycle stages act more as a reference point than a true representation of how work unfolds day to day.

Where user needs lose their influence

Even when user needs are well defined, they don’t always shape outcomes. They can be overridden by stakeholder preferences, especially when decisions are driven by internal priorities or external pressures. They can also be simplified into high-level statements that are easy to agree with but difficult to act on, losing the specificity that made them useful in the first place. Under delivery pressure, they’re often pushed aside altogether, as teams focus on meeting deadlines rather than fully understanding the problem.

Over time, this changes their role. User needs remain visible in documents, presentations, and artefacts, but become less active in decision-making. The service continues to evolve, but not always in ways that reflect how people actually use it. Decisions are made and changes are implemented, but the connection back to real behaviour becomes weaker. That drift is rarely intentional; it’s usually a result of competing pressures and shifting priorities.

This pattern doesn’t sit in isolation. It connects to the wider environment services are built in and to the challenges of designing services in the public sector, where structural constraints, delivery pressure, and organisational dynamics all shape what gets prioritised and what gets left behind.

What it looks like when it works

When user needs are used well, they stop being something the team produced earlier and instead become part of everyday thinking, influencing conversations, decisions, and priorities. Instead of asking “what can we deliver,” teams start asking “what problem are we solving, and for whom?” This shift changes how trade-offs are made and what gets attention.

They also create a shared reference point across disciplines. Service designers, user researchers, product managers, and developers can align around the same understanding of what matters, even if they approach it from different angles. When decisions are challenged, they’re grounded in evidence rather than opinion. When priorities shift, there’s a clearer way to assess impact. Over time, needs are revisited, refined, and tested against real usage, staying connected to how the service behaves rather than becoming static artefacts.

When this is working well, it tends to show up in consistent ways:

Decisions are explained in terms of user impact, not just delivery constraints

Teams refer back to specific needs during discussions, not just at the start of a project

Prioritisation is driven by what changes outcomes, not what is easiest to implement

Trade-offs are made explicit, with a clear understanding of who is affected

User needs evolve over time as new evidence emerges, rather than staying fixed

Bringing it back to decisions

User needs only matter if they influence what gets built, changed, or removed. Without them, services tend to reflect internal structures. Decisions are shaped by what is easiest to deliver or justify instead of what works for users, and improvements focus on individual parts rather than how the service functions as a whole.

With them, decisions are easier to understand because they are grounded in evidence. The impact of changes can be traced more directly, which feeds into how success is measured across the service and supports a more holistic understanding of outcomes. User needs don’t simplify the work, but they make it more visible by connecting evidence to action and action to outcomes, in a way that holds up even in complex environments.

User needs are often talked about as if working with them is a straightforward and easy process. Start with users, design around needs, follow the evidence. On the surface, this suggests a clear and logical starting point for improving services. It implies that if you understand what people need, the rest of the work naturally follows. That framing is appealing because it simplifies something that is actually much more complex.

However, it’s rarely that clean. User needs in government are built from incomplete, sometimes conflicting signals about how people interact with services. They come from different sources, reflect different perspectives, and often change as more is learned. They don’t arrive fully formed, and they don’t stay stable. As services evolve and real-world conditions shift, so do the needs themselves, which means teams are constantly interpreting, refining, and reapplying them rather than treating them as fixed inputs.

What “user needs” actually mean

User needs are often misunderstood because they get reduced to artefacts. They’re not personas, assumptions, or stakeholder opinions dressed up as insight. They are clear, testable statements, grounded in evidence, that describe what someone is trying to do, the situation they’re in, and the barriers that get in their way.

A useful user need is specific enough to act on. For example, instead of saying “users need clear information,” a stronger version might be: users need to know what happens after they submit their application so they’re not left uncertain about next steps or timelines. That level of clarity gives teams something concrete to design and test against. Without it, user needs stay at the level of general principles, easy to agree with, but difficult to apply in real decisions.

Where user needs come from

User needs don’t come from a single source and they’re rarely complete when first identified. They’re built by piecing together different types of evidence, each showing a slightly different view of the service. User research is one input. Interviews, observations, and usability testing reveal how people approach a service, what they expect, and where they struggle. But that only gives you a partial picture. People don’t always describe their behaviour accurately, and research tends to capture a moment in time rather than how things change across different situations or over longer periods.

Operational data adds another layer. Call logs, complaints, and drop-off points show where services break at scale, often highlighting patterns that don’t appear in smaller research samples. Frontline staff bring a different kind of insight again. Caseworkers and support teams see how rules are interpreted in practice, where people get stuck, and how workarounds emerge under pressure. Bringing these sources together is what makes user needs more reliable. That evidence is rarely held in one place, and starts to make more sense when you look at how government services are designed behind the scenes.

Turning research into usable needs

Research produces observations, transcripts, and patterns, but these don’t automatically translate into something that can guide decisions. Teams have to interpret what matters, identify what repeats, and decide what has the most impact. That process involves judgement and analysis.

There’s a tendency to over-generalise. Specific insights get turned into vague statements that lose their usefulness. At the same time, there’s a risk of oversimplifying complex situations just to make them easier to communicate. Both approaches weaken the connection between evidence and decision-making.

Prioritisation is where this becomes practical. Not all needs carry the same weight, and treating them equally usually leads to shallow decisions. Some needs show up frequently but are relatively easy to resolve. Others are less common but have a disproportionate impact, blocking people from completing a service altogether or forcing them into workarounds. There are also needs that sit in between, not immediately visible, but compounding over time through repeated friction or confusion.

Prioritisation is not just a data exercise. Frequency alone doesn’t tell you what matters most, and neither does severity in isolation. Teams have to consider how needs interact with each other, how they affect different groups of users, and what the downstream consequences are if they’re not addressed. A need that causes a small delay might seem minor, until you see it driving repeat contact or increasing pressure on operational teams. Another might only affect a smaller group, but if that group is already in a vulnerable situation, the impact is much greater.

This is why context is critical. Prioritisation involves judgement about what will make the biggest difference to how the service works overall. It also involves being explicit about trade-offs, because focusing on one need often means delaying or deprioritising another. In practice, good prioritisation is less about ranking a list and more about understanding which needs, if addressed, will meaningfully change outcomes for both users and the service itself.

How user needs influence design decisions

User needs, when applied correctly, affect the order of steps, the way information is presented, and how users move between channels. They help teams decide what to include, what to remove, and what to simplify. More importantly, they provide a way to navigate trade-offs. When teams are deciding between competing priorities, user needs offer a reference point. They help answer questions like:

Does this change actually help someone complete the task?

Does it reduce confusion, or move it somewhere else?

This is how they become part of day-to-day work. This dynamic shows up in how service design works in government teams, where decisions are shaped by multiple constraints and perspectives rather than a single, linear process.

Navigating tension with policy and constraints

User needs don’t sit above policy, systems, or operational limits. They sit alongside them. As a result, there are many situations where user needs and constraints don’t align. A user might need flexibility, but eligibility rules may be fixed. A process might need to be shorter, but verification requirements may prevent that. A clearer explanation might still need to use language defined by legislation.

In such moments, user needs don’t automatically “win.” Instead, they make the impact of decisions visible, showing where friction exists, who is affected, and what the consequences are. This shifts the conversation from abstract rules to real outcomes. This tension sits within a broader pattern explored in the gap between service design and policy design in government, where the alignment between intent and experience is continually worked through, and doesn’t always hold.

Designing beyond the “happy path”

Services are often designed around a standard journey. This journey reflects an ideal version of how a service should work. However, real usage is different. People pause, restart, switch channels, and deal with changing circumstances. They might not have all the information required, misunderstand a question, or struggle to provide evidence. These situations are not exceptions; they are part of normal service use.

Designing for this reality means building in support for recovery: allowing people to save progress, return later, or take alternative routes. It also means recognising that a service that only works under ideal conditions is incomplete. This becomes more visible when designing for vulnerable and hard-to-reach users. For such groups, the gap between expected and actual behaviour is often the widest, and its impact the most profound.

Using user needs across the lifecycle

User needs are not fixed at the start of a project. While they’re often identified early, the first version is usually incomplete and shaped by limited evidence. During Discovery, teams begin to understand what matters, but they’re often working with partial insight and assumptions that haven’t been fully tested. As work moves into Alpha, these needs are explored through prototypes and experiments, which start to reveal what holds up and what doesn’t. By the time a service reaches Beta, needs are refined further based on real usage, and in Live, they continue to evolve as new patterns and behaviours emerge.

© 2025. All rights reserved.
