Challenges of designing services in the public sector

Complexity that builds up over time has a direct impact on how services behave. There’s rarely a single owner of the full experience. Responsibility is spread across teams, departments, and suppliers, each managing a different part of the journey. From within those boundaries, things can appear to work reasonably well, but when those parts are connected, the gaps become visible. The service starts to feel fragmented because no one is shaping how it works as a whole.

Unlike in the private sector, users don’t get to opt out of that complexity. If someone needs to resolve a tax issue or access healthcare, they have to use the service that exists. That raises the stakes: failure isn’t just inconvenient; it can affect someone’s finances, wellbeing, or access to support. None of this comes down to poor practice or lack of effort. It’s structural, and understanding that context is central to what service design actually means in the public sector.

The policy vs user needs tension

One of the most consistent challenges in public sector service design sits between policy and user needs. Policy operates at a level of intent that assumes that rules can be applied clearly and consistently, and that people will understand and follow them as designed. User research, on the other hand, shows how those rules actually play out. It reveals where people struggle to interpret requirements, where instructions don’t match real situations, and where seemingly straightforward processes become difficult to complete in practice.

Service designers sit in the middle of that gap. They can’t ignore policy, but they also can’t ignore the consequences when services don’t work for the people using them. That creates constant trade-offs. This tension doesn’t get resolved once and for all; it shows up repeatedly as services evolve. You see it most clearly when comparing the gap between policy intent and service reality.

Fragmented ownership across departments

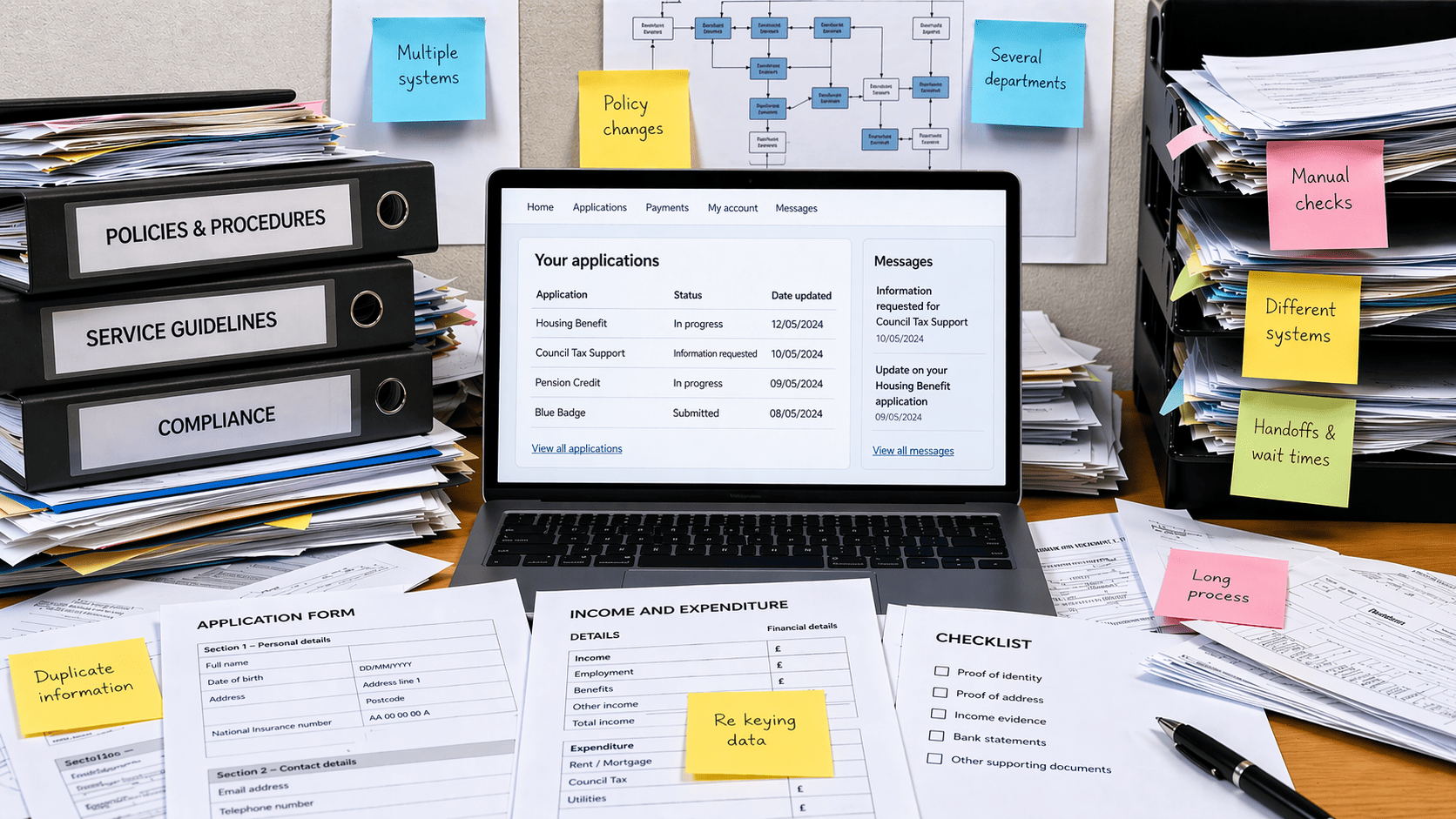

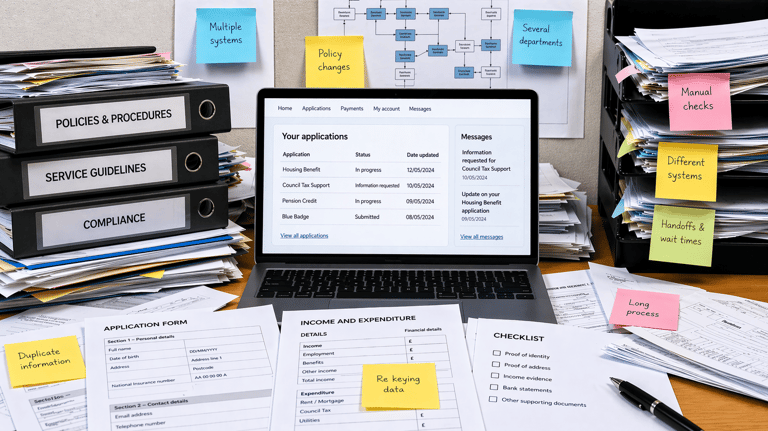

Services stretch across departments, agencies, operational units, and external suppliers, each responsible for a different part of the overall experience. Every part operates with its own priorities, targets, and constraints, which makes sense internally. Teams are set up to manage their piece of the work, and from that perspective, things can feel under control. But this view only reflects how the organisation is structured, not how the service is experienced.

Users move through the service as a single journey, while the organisation delivers it as a series of separate responsibilities. That’s where friction starts to appear. Working within the service, you may hear things like “that’s not our part of the service” or “we only handle this stage,” and while those statements are often accurate, they reveal the underlying issue. Local optimisation can make the overall service worse. One team improves efficiency by shifting effort elsewhere. Another reduces workload but creates confusion downstream. These effects are hard to see without stepping back, which is why mapping complex government services across departments often reveals just how disconnected the service really is.

Legacy systems and technical constraints

Many government services depend on systems that were built years ago. These systems are often deeply embedded and difficult to change. They don’t integrate easily with newer platforms, and they shape how data is collected, structured, and processed across the service. Because they underpin critical operations, replacing them is expensive, risky, and slow. As a result, teams don’t normally remove them; they work around them.

These workarounds start small but tend to accumulate over time. Symptoms that arise from this include:

Information getting collected in ways that don’t match how users understand their situation

The same data being requested multiple times because systems can’t share it

New journeys layered on top of old infrastructure rather than replacing it

Gradually, these adjustments become part of the service itself, creating a constant trade-off between designing the “right” service and designing something that can actually be delivered. Most teams land somewhere in between, which is why incremental improvement becomes the default, even when a more fundamental redesign would make more sense but is far harder to achieve.

Risk aversion and governance

Government services operate under a high level of scrutiny. Decisions are shaped by legal requirements, financial controls, reputational risk, and standards around accessibility and security. These aren’t optional considerations; they’re fundamental to how public services are delivered. As a result, every change needs to be defensible. Decisions are rarely made in isolation and often need to account for multiple layers of oversight.

This scrutiny leads to governance processes such as approvals, service assessments, and assurance checkpoints. These exist for good reasons, but they also shape how work moves forward. They can slow down change, limit how quickly teams can test ideas, and push decisions toward safer, more predictable options. This creates a constant trade-off between moving quickly and managing risk, between experimenting and maintaining certainty.

Delivery pressure vs good design practice

Most teams are working to deadlines that are often driven by policy commitments, funding cycles, or programme timelines, rather than the natural pace of good design work. There’s usually an expectation to “deliver something” within a fixed window, regardless of how well the problem is understood. This pressure shapes how teams prioritise their time and effort from the start.

Under these conditions, certain things get reduced or removed. User research becomes lighter, iteration cycles get shorter, and there’s less space to step back and understand how the full service fits together. Teams focus on what can be delivered within the time available, which often means improving individual touchpoints rather than addressing deeper structural issues. Decisions end up being made with partial information because teams don’t have the time to gather more.

This tension is hard to avoid. Short-term delivery sits against long-term service quality, and shipping something tangible often takes priority over improving how the whole service works. This is where the gap between theory and practice becomes most visible.

Designing for vulnerable and complex users

Many public services are used by people dealing with difficult situations. This might include financial pressures, ongoing health issues, major life changes, or limited access to digital tools and support. These aren’t edge cases; they’re common contexts in which people interact with the government. The way a service is designed needs to account for that reality, not assume a level of stability or clarity that many users simply don’t have.

Standard “happy path” design starts to break down in these conditions. Processes often assume people have all the information they need, can complete tasks in one go, and fully understand what’s being asked of them. When those assumptions don’t hold, the service becomes harder to navigate. Small points of friction, such as poorly timed requests, can quickly become blockers that prevent people from continuing at all.

This creates a set of trade-offs that teams have to work through. Designing for efficiency may exclude those with more complex needs, while designing for inclusivity can introduce additional complexity into the service. Scalable solutions don’t always account for individual circumstances, but tailored support is harder to deliver consistently. If a service only works for straightforward cases, it fails a significant portion of its users. That’s why understanding how user needs shape government services becomes critical in practice, and why designing services for vulnerable and hard-to-reach users is less about edge cases and more about whether the service works under real conditions.

Measuring success is messy

A single service often spans multiple systems, teams, and channels, each capturing different parts of the experience. Data is spread across platforms, collected in different formats, and owned by different parts of the organisation. That makes it difficult to build a complete view of what’s actually happening from end to end.

On top of that, outcomes are rarely shaped by one factor alone. Policy changes, operational decisions, and external conditions all influence how a service performs. Metrics are usually tied to specific parts of the service, not the whole. One team might focus on completion rates, another on call volumes, and another on processing time. Each measure is valid in isolation, but they don’t always connect in a way that explains the overall experience.

This creates a kind of ambiguity that teams have to work through. It becomes harder to see the full picture, harder to connect changes to outcomes, and harder to know whether the service is genuinely improving. Without this connection, measurement can turn into reporting rather than learning. That’s why measuring success in public sector service design is less about tracking performance and more about understanding impact across the service.

The emotional reality of the work

Service design in government involves constant negotiation, balancing competing priorities, and making decisions with incomplete information. You’re often working across teams that see the problem differently, each with their own constraints and pressures. Progress is rarely linear. Work moves forward, then pauses, then shifts direction as new information, priorities, or constraints emerge.

That can be frustrating, especially when good work doesn’t always translate directly into change. Ideas may be adapted, delayed, or reshaped as they move through policy, delivery, and governance layers. The impact is often spread across the service rather than visible in a single outcome, which makes it harder to explain and sometimes harder to defend. But this is the reality of the work. It’s not about applying a clean process and seeing immediate results, it’s about working within a complex system, influencing decisions over time, and gradually improving how the service holds together.

Conclusion

The challenges of designing services in the public sector come from the nature of the environment itself:

complex systems that have evolved over time

distributed ownership across teams and organisations

policy constraints that shape what’s possible

and high stakes that make failure more serious

These factors don’t sit at the edges of the work, they define it. Understanding that doesn’t remove the difficulty, but it does change how you approach it. Instead of waiting for ideal conditions, you work with what exists. Instead of expecting clean, end-to-end solutions, you navigate trade-offs and partial improvements. And instead of focusing on isolated changes, you look for ways to make the service hold together more effectively over time, even within the constraints that are unlikely to go away.

Service design in government is often described in terms of principles like user needs, end-to-end thinking, and joined-up services. The reality feels very different. Most of the work isn’t about applying best practice; it’s about working through constraints that don’t go away, navigating trade-offs without clean answers, and trying to improve services that were never designed as a whole in the first place.

Why public sector service design is inherently difficult

In the public sector, you’re stepping into services shaped by existing policy, legacy systems, and organisational structures that have built up over time. Many of these services weren’t designed end-to-end; they’ve evolved in response to changing priorities, funding decisions, and operational needs. New layers get added to meet immediate requirements, while older ones rarely get removed, which means complexity accumulates rather than disappears.


© 2025. All rights reserved.
