A problem framing framework for challenging assumptions and driving better decisions

Kola Wale

5/4/2026 · 7 min read

Many teams struggle because they’re not solving the same problem. This often starts with a rush to solutions, especially under pressure to deliver, when having a direction feels more valuable than understanding. At the same time, different roles interpret the issue through their own lens: policy sees intent, operations sees constraints, and design sees experience. At a high level this looks like alignment, but once you zoom in, the interpretations don’t fully match. Underneath them sit hidden assumptions that rarely get surfaced or challenged. The result is activity without clarity, and progress that feels productive but isn’t always pointing in the right direction.

The role of framing in hypothesis-driven service design

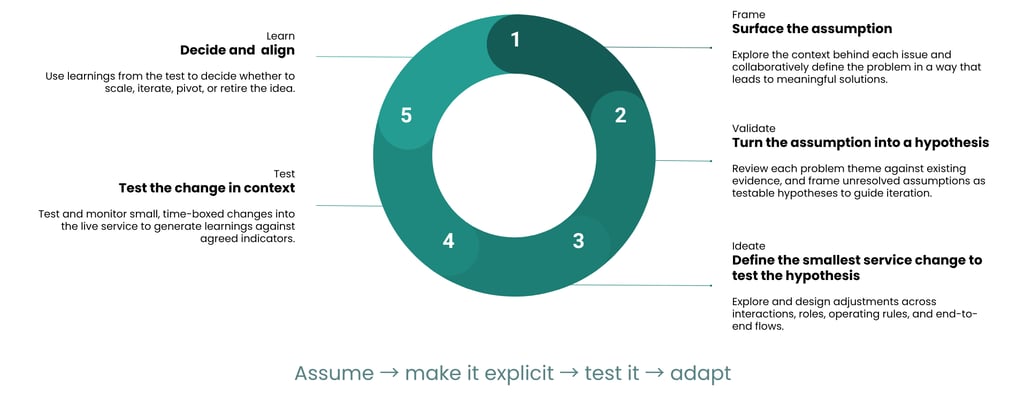

Framing sits within a wider hypothesis-driven, iterative service design approach, where teams move from assumptions to evidence through cycles of validation, testing, and learning. Before anything can be tested, improved, or delivered, teams need to be aligned on what they’re trying to understand. Without that, hypotheses are built on a shaky foundation and testing becomes disconnected from the real problem.

Framing provides the structure to bring policy intent, operational reality, and user need into one shared view.

It anchors the rest of the loop by making assumptions visible and turning them into something that can be worked with. If the broader approach is about moving from assumption to evidence, then framing is the point where that shift starts to take shape.

What good framing needs to achieve

Good framing is about creating clarity where there is ambiguity. It brings different perspectives into one shared understanding and makes uncertainty visible in a way that can be acted on. When done well, it gives teams direction by:

Aligning multiple disciplines: Framing creates a shared understanding across policy, operations, design, delivery, and analysis. It reduces misalignment by ensuring everyone is working from the same definition of the problem.

Surfacing assumptions explicitly: Instead of leaving assumptions buried in conversations or decisions, framing makes them visible and creates a space to question and test them.

Connecting evidence to decision making: It links what is already known with what still needs to be understood, which helps teams avoid starting from scratch or duplicating effort.

Creating momentum: Good framing leads directly to action. It produces outputs that inform hypotheses, guide testing, and shape next steps.

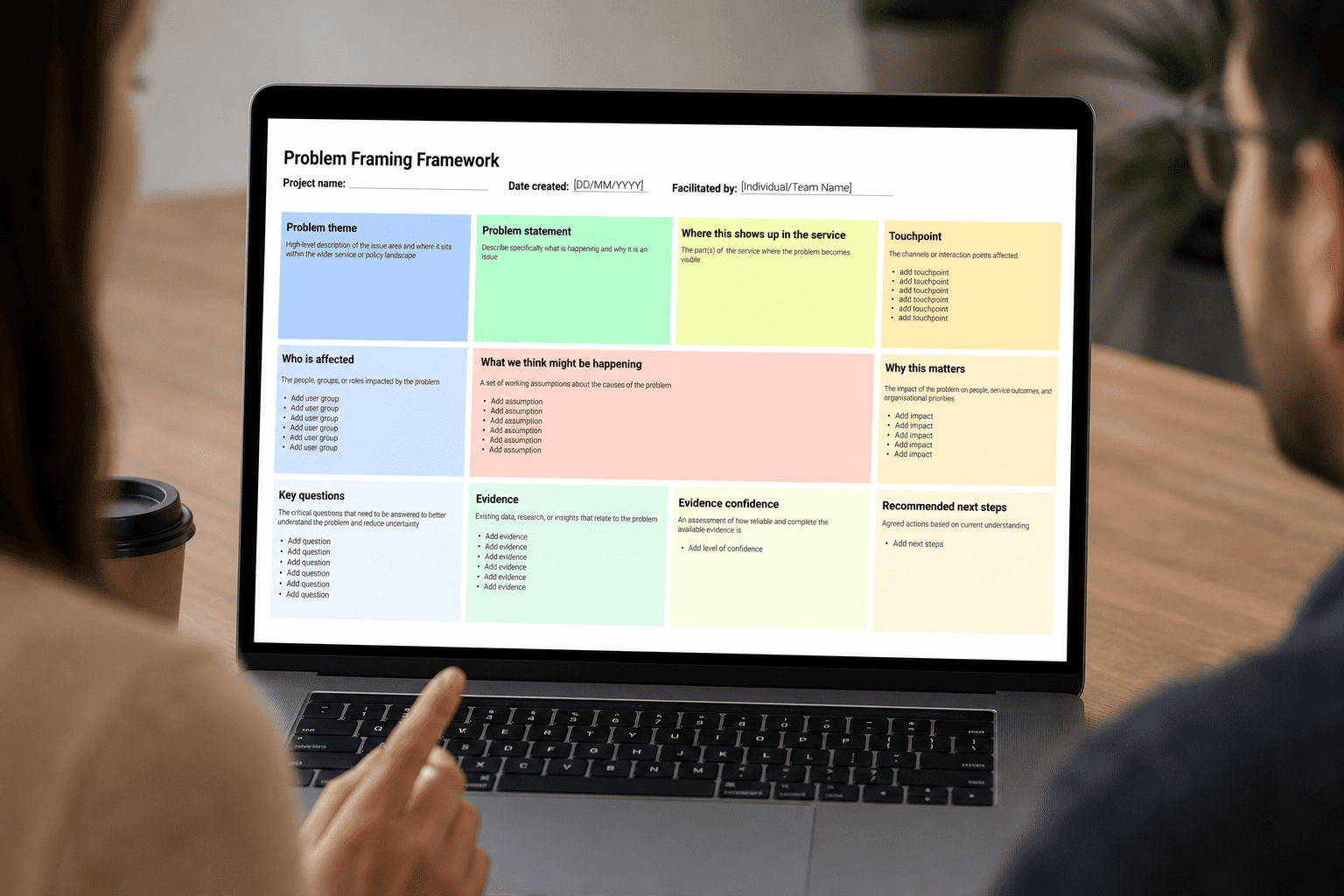

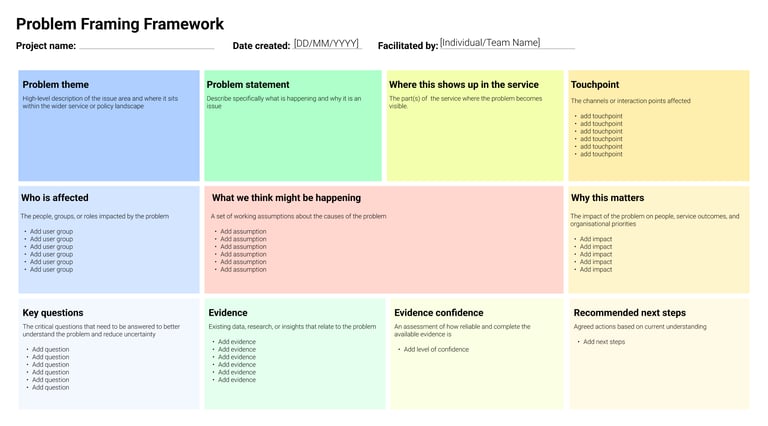

A practical problem framing framework for aligning teams and surfacing assumptions

This framework is designed to help teams define problems clearly while making assumptions visible and usable. It prioritises clarity over completeness, so that what is captured can actually be acted on. The structure is deliberate but flexible, allowing teams to adapt it to their context without losing consistency. It is also built for collaboration: each discipline contributes its perspective in a way that fits with the others, which is crucial for making the captured information as comprehensive and accurate as possible.

How each part of the problem framing framework helps define problems and expose assumptions

Problem theme: Start by anchoring the issue within the wider service or policy landscape. This helps teams understand where the problem sits and why it matters in a broader context. In government settings, this often links back to policy intent or strategic priorities.

Problem statement: Move to a clearer articulation of what is actually happening. This should describe the situation in specific terms, avoiding assumptions about causes at this stage.

Where this shows up in the service: Identify the points in the service where the problem becomes visible. This makes the issue tangible and helps ground discussions in real interactions and processes.

Touchpoints: Document the channels or interaction points involved, such as online services, phone calls, or in-person interactions. This helps identify where changes may need to happen.

Who is affected: Define the people or groups impacted by the problem. This should cover everyone who interacts with the problem area, including edge cases and vulnerable users.

What we think is happening: This is the core section where assumptions are captured. The goal here is to articulate beliefs without presenting them as facts, allowing them to be validated later.

Why this matters: Connect the problem to outcomes, including user impact, operational efficiency, and policy goals. This helps prioritise what needs attention.

Key questions: Turn these assumptions into questions that guide inquiry. These questions will shape research, analysis, and testing.

Evidence: Capture existing data, research, and insight related to the problem. In organisations where analysts maintain a library of past research reports and evaluations, this is where they play a key role.

Evidence confidence: Assess how reliable and complete the evidence is. This helps avoid overconfidence in partial data.

Recommended next steps: Define what should happen next based on current understanding. This could include testing, further research, or immediate changes.

The role of analysts and evidence in this process

Analysts play a critical role in grounding the framing in reality. They bring visibility of existing evidence, including data trends, past research, and ongoing tests. This helps teams understand what is already known and where the gaps are. By maintaining an overview of evidence and activity, analysts prevent duplication of effort and ensure that new work builds on what already exists. This makes the process more efficient and strengthens the quality of decisions being made.

In teams without dedicated analysts, this responsibility often sits across multiple roles. Service designers, user researchers, and product leads may each hold parts of the evidence, but it can be fragmented. Without a clear view, teams risk missing important insights or repeating work that has already been done. As a result, framing relies more on assumptions than on evidence, and additional effort is needed to bring information together before decisions can be made.

In agency environments, the challenge is often access rather than capability. Agencies may have strong skills in research and analysis, but limited visibility of internal data or ongoing tests. This creates gaps in understanding that need to be addressed through collaboration with client teams. Framing in this context requires more effort to validate assumptions and build a shared evidence base.

In startups, speed often takes priority over structure. Teams may rely heavily on instinct and rapid experimentation, with less formal documentation of evidence. While this can drive quick progress, it increases the risk of repeating mistakes or missing patterns over time.

To adapt this framework, teams need to adjust how evidence is gathered and shared. This may mean creating lightweight evidence logs, assigning ownership of insight, or building simple ways to track tests.
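A lightweight evidence log can be as simple as a list of entries, each with an owner and a few tags. The Python sketch below is a hypothetical example: the `EvidenceEntry` fields, the `find_related` helper, and the sample entries are illustrative assumptions, not a prescribed schema. Before starting new research, a team searches the log for related work, which is one way to reduce the duplication described above.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class EvidenceEntry:
    """One item in a hypothetical lightweight evidence log."""

    title: str
    kind: str      # e.g. "data", "research", "test"
    owner: str     # who holds the insight and can explain it
    status: str    # e.g. "complete", "in progress"
    recorded: date
    tags: set[str] = field(default_factory=set)


def find_related(log: list[EvidenceEntry], tags: set[str]) -> list[EvidenceEntry]:
    """Entries sharing at least one tag with the query, newest first."""
    related = [entry for entry in log if entry.tags & tags]
    return sorted(related, key=lambda entry: entry.recorded, reverse=True)


# Invented entries, for illustration only.
log = [
    EvidenceEntry("Missed bin report volumes", "data", "Analyst", "complete",
                  date(2026, 1, 10), {"waste", "reporting"}),
    EvidenceEntry("Collection day survey", "research", "User researcher", "in progress",
                  date(2026, 3, 2), {"waste", "schedules"}),
    EvidenceEntry("Parking permit audit", "data", "Analyst", "complete",
                  date(2026, 2, 1), {"parking"}),
]

for entry in find_related(log, {"waste"}):
    print(entry.recorded, entry.title, "owner:", entry.owner)
```

Recording an owner alongside each entry supports the "assigning ownership of insight" idea: the log points to a person who can explain the evidence, not just to a document.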

A step-by-step example of using a problem framing framework to define problems and surface assumptions

Let’s take a common UK local authority service: reporting missed bin collections.

Problem theme

“Residents are repeatedly reporting missed waste collections, creating additional workload for council teams and dissatisfaction among residents.”

Problem statement

“A high volume of missed bin reports are being submitted, even in areas where collections have been completed, leading to unnecessary follow-ups.”

Where this shows up in the service

Online reporting forms

Customer service calls

Follow-up operational processes

Touchpoints

Council website

Phone lines

Internal waste management systems

Who is affected

Residents, particularly those in high-density areas

Council staff handling reports and collections

What we think is happening (assumptions)

Residents are not aware of collection schedules

Bins are being missed due to operational issues

The reporting system is too easy to access without validation

Why this matters

Increased operational cost

Reduced trust in the service

Inefficiencies in waste management

Key questions

Are collections actually being missed or incorrectly reported?

Do residents understand collection schedules?

What triggers a report submission?

Evidence

Data shows a mismatch between reported missed collections and actual operational records.
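The mismatch described here can be quantified by matching each report against operational completion records. This Python sketch assumes, hypothetically, that both sources share a property identifier and a scheduled collection date; real council systems will differ, and the names `MissedReport`, `split_reports`, and `mismatch_rate`, as well as the sample data, are illustrative.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MissedReport:
    """A resident's report of a missed collection (hypothetical shape)."""

    property_id: str
    collection_date: date


def split_reports(
    reports: list[MissedReport],
    completed: set[tuple[str, date]],
) -> tuple[list[MissedReport], list[MissedReport]]:
    """Split reports into those contradicted by a completion record and those without one."""
    contradicted: list[MissedReport] = []
    unmatched: list[MissedReport] = []
    for report in reports:
        if (report.property_id, report.collection_date) in completed:
            contradicted.append(report)  # operations recorded this collection as done
        else:
            unmatched.append(report)     # no completion record: possibly a genuine miss
    return contradicted, unmatched


def mismatch_rate(reports: list[MissedReport], completed: set[tuple[str, date]]) -> float:
    """Share of reports whose collection is recorded as completed."""
    if not reports:
        return 0.0
    contradicted, _ = split_reports(reports, completed)
    return len(contradicted) / len(reports)


# Invented data: three reports for the same day, two of which have completion records.
day = date(2026, 4, 6)
reports = [MissedReport("A1", day), MissedReport("A2", day), MissedReport("B7", day)]
completed = {("A1", day), ("A2", day)}
print(f"{mismatch_rate(reports, completed):.0%}")  # → 67%
```

A high mismatch rate shows that reports and records disagree, but not why. A report can be contradicted because a resident misread the schedule or because the completion record itself is wrong, which is why confidence in this kind of evidence stays moderate until it is paired with insight into user behaviour.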

Evidence confidence

Moderate: data exists but lacks context on user behaviour.

Recommended next steps

Run a small-scale test of changes to the reporting flow.

Resulting hypothesis

If residents are shown real-time collection updates before submitting a report, the number of unnecessary reports will decrease.

We will know this to be true when:

There is a measurable reduction in duplicate or unnecessary missed collection reports within the test area over a defined period (e.g. 4–6 weeks)

This reduction coincides with an increase in users viewing collection status information before submitting a report, without a corresponding drop in legitimate missed collection reports
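Success criteria like these can be written down as explicit checks before the test starts, so the team agrees in advance what counts as support for the hypothesis. In this Python sketch, the field names, the thresholds (`min_reduction`, `legit_tolerance`), and the example counts are all invented for illustration. It compares the test area against its own baseline period; a comparison area would help separate the effect of the change from seasonal variation.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Counts for one period (e.g. several weeks) in the test area; fields are hypothetical."""

    unnecessary_reports: int  # reports contradicted by operational records
    legitimate_reports: int   # reports where a collection was genuinely missed
    report_sessions: int      # users who started a report
    viewed_status_first: int  # of those, users who viewed collection status first

    @property
    def status_view_rate(self) -> float:
        if self.report_sessions == 0:
            return 0.0
        return self.viewed_status_first / self.report_sessions


def relative_change(before: float, after: float) -> float:
    """Fractional change from before to after; negative means a decrease."""
    if before == 0:
        raise ValueError("baseline count must be non-zero")
    return (after - before) / before


def hypothesis_supported(
    baseline: PeriodStats,
    test: PeriodStats,
    min_reduction: float = 0.2,    # hypothetical: unnecessary reports fall by at least 20%
    legit_tolerance: float = 0.1,  # hypothetical: legitimate reports fall by at most 10%
) -> bool:
    fewer_unnecessary = (
        relative_change(baseline.unnecessary_reports, test.unnecessary_reports) <= -min_reduction
    )
    more_status_views = test.status_view_rate > baseline.status_view_rate
    legit_held = (
        relative_change(baseline.legitimate_reports, test.legitimate_reports) >= -legit_tolerance
    )
    return fewer_unnecessary and more_status_views and legit_held


# Invented figures for illustration only.
baseline = PeriodStats(unnecessary_reports=120, legitimate_reports=40,
                       report_sessions=400, viewed_status_first=60)
trial = PeriodStats(unnecessary_reports=80, legitimate_reports=38,
                    report_sessions=380, viewed_status_first=190)
print(hypothesis_supported(baseline, trial))  # → True
```

All three conditions must hold, so a fall in unnecessary reports that comes with a sharp drop in legitimate ones does not count as support.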

How to run an effective problem framing session with multidisciplinary teams

Running a strong framing session starts by creating the right conditions for honest, structured thinking.

Who to include

Policy

Operations

Service designers, user researchers, content designers, and interaction designers (where appropriate)

Analysts or data specialists

Delivery teams

Product managers

How to facilitate

Start with the problem theme to anchor the discussion

Move through each section of the framework systematically

Encourage contribution from all disciplines

Capture assumptions explicitly without validating them yet

Keep discussions grounded in real service context

Common pitfalls to avoid

Dominant voices promoting a single perspective and overriding others

Jumping to solutions instead of focusing on understanding the problem

Treating assumptions as facts when evidence is lacking

Overcomplicating the session by trying to capture everything

Lack of follow-through, which turns the session into a documentation exercise rather than a way to inform next steps

How problem framing connects to hypothesis-driven service design and iteration

Framing outputs feed directly into hypothesis creation by turning assumptions into structured, testable statements. Teams should define what they think is happening and what they expect to see if that assumption is true. These hypotheses will go on to inform design. Because the problem has been clearly defined, teams can design targeted interventions that test specific assumptions.

The results of these experiments or tests lead to service changes. Whether the decision is to scale, iterate, or pivot, it will be based on what has been learned rather than what was initially assumed. This is where the wider hypothesis-driven, iterative approach to service improvement comes into play, moving from framing into validation, testing, and learning.

Why problem framing helps reduce exclusion and leads to better service outcomes

When assumptions are surfaced early, teams are more likely to identify where services may not work for certain groups. This turns exclusion from something discovered late into something teams can actively account for in the design. It also ensures that decisions are grounded in real understanding rather than partial views or dominant perspectives. More importantly, it moves framing beyond a workshop activity into a decision-making tool. When used consistently, it helps teams deliver services that work in practice for the people who rely on them.