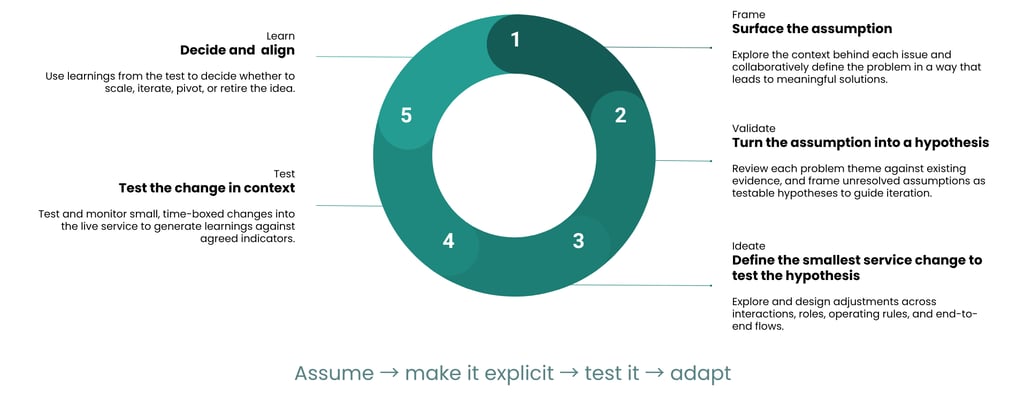

At its core, this approach mirrors a loop rather than a straight line. Each phase builds on the last, but more importantly, each one is designed to reduce uncertainty in a structured way. What makes it work in practice is that every phase has a clear purpose, a set of activities, and tangible outputs that help teams move forward with confidence.

1. Framing: This is where teams attempt to understand the problem properly and make any assumptions visible.

Typical activities:

Cross-disciplinary framing workshops (policy, ops, design, research, analysis)

Mapping where issues show up across the service journey

Identifying affected users and edge cases

Capturing assumptions about what might be happening and why

Outputs:

Clearly defined problem statements

A structured list of assumptions

Key questions that need answering

An initial view of available evidence and gaps

2. Validating: Once assumptions are visible, the next step is to make them usable by turning them into testable hypotheses, and to check against existing evidence whether the problem actually needs further exploration.

Typical activities:

Reviewing existing data, research, and operational insight

Working with analysts to assess evidence strength

Reframing assumptions into testable hypotheses (e.g. “We believe X is happening because Y, which leads to Z outcome”)

Prioritising which hypotheses to test

Outputs:

A prioritised set of testable hypotheses

Evidence summaries with confidence levels

A clear rationale for what to test first
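As an illustration only, the hypothesis template from this phase ("We believe X is happening because Y, which leads to Z outcome") could be captured as a small record so that every hypothesis is written in the same shape and the agreed test order is explicit. The class name, the field names, the confidence scale, and the `test_order` rank are assumptions made for this sketch, not part of the approach itself.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    """One testable hypothesis in the 'We believe X because Y, leading to Z' shape."""
    observation: str  # X: what the team believes is happening
    cause: str        # Y: why the team believes it is happening
    outcome: str      # Z: the outcome it leads to
    confidence: str   # hypothetical scale for evidence strength: "low", "medium", "high"
    test_order: int   # hypothetical rank agreed by the team (1 = test first)

    def statement(self) -> str:
        """Render the hypothesis using the template wording."""
        return (f"We believe {self.observation} is happening because "
                f"{self.cause}, which leads to {self.outcome}.")


def prioritise(hypotheses: list[Hypothesis]) -> list[Hypothesis]:
    """Return the hypotheses in the order the team agreed to test them."""
    return sorted(hypotheses, key=lambda h: h.test_order)


if __name__ == "__main__":
    # Illustrative wording only; real hypotheses come out of the framing work.
    backlog = [
        Hypothesis("non-attendance", "appointment details are unclear",
                   "missed appointments", "low", 2),
        Hypothesis("non-attendance", "reminders arrive too early",
                   "missed appointments", "medium", 1),
    ]
    for hypothesis in prioritise(backlog):
        print(hypothesis.test_order, hypothesis.statement())
```

Keeping the rank as a field the team sets by hand, rather than computing it, reflects that prioritisation here is a facilitated decision and not a formula.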

3. Ideation: Now the focus shifts from understanding to defining the smallest possible change that can test a hypothesis.

Typical activities:

Collaborative design sessions across disciplines

Exploring changes to touchpoints, roles, rules, or journeys

Mapping operational implications with delivery teams

Aligning on feasibility and constraints

Outputs:

Defined intervention(s) tied directly to the hypothesis

Clear description of what will change in the service

Agreement on scope, duration, and boundaries of the test

4. Test: This is where the learning happens by introducing changes into the live service in a controlled, time-boxed way.

Typical activities:

Deploying small changes into the live environment

Monitoring behavioural and operational data

Gathering qualitative feedback where needed

Tracking defined success indicators

Outputs:

Performance data

Observed user and operational responses

Early signals of impact

5. Learn: This is where teams come back together to make sense of what happened and decide what to do next.

Typical activities:

Reviewing test results against hypotheses

Interpreting data with analysts and researchers

Facilitating decision-making sessions with stakeholders

Aligning on next steps based on evidence

Outputs:

A clear decision on whether to scale, iterate, pivot, or retire

Documented learnings and rationale

Inputs into the next iteration of the loop

This phase closes the loop, but it also sets up the next one. Learning isn’t the end; it’s the input for the next round of framing and refinement. Each phase gives teams just enough structure to move forward with confidence, while staying flexible enough to adapt as new evidence emerges.

How assumptions move through the lifecycle

What makes this approach powerful is that it treats assumptions as something to move through a lifecycle, not something to resolve upfront. An assumption starts as a loose belief, often based on partial data, experience, or policy intent. Instead of acting on it immediately, the loop forces it to become explicit, shaped into a hypothesis, tested in context, and evaluated against real outcomes. This turns ambiguity into structured learning.
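One way to picture this lifecycle is as a fixed sequence of stages that an assumption can only move through in order. The sketch below is illustrative: the stage names are taken from the description above, while the `Stage` enum and the `advance` function are hypothetical names introduced for the example.

```python
from enum import Enum


class Stage(Enum):
    """Stages an assumption passes through, in order."""
    LOOSE_BELIEF = 1  # based on partial data, experience, or policy intent
    EXPLICIT = 2      # written down and visible to the team
    HYPOTHESIS = 3    # reframed so it can be tested
    TESTED = 4        # tried in the context of the live service
    EVALUATED = 5     # compared against real outcomes


def advance(stage: Stage) -> Stage:
    """Move an assumption to the next stage; stages cannot be skipped."""
    if stage is Stage.EVALUATED:
        # The loop restarts with a new or revised assumption instead.
        raise ValueError("already evaluated; feed the learning into the next loop")
    return Stage(stage.value + 1)


if __name__ == "__main__":
    stage = Stage.LOOSE_BELIEF
    while stage is not Stage.EVALUATED:
        stage = advance(stage)
        print(stage.name)
```

The point of the single `advance` step is that there is no shortcut from a loose belief straight to a solution.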

Example: Missed appointments in an outpatient NHS service

Initial policy assumption:

“Users are missing appointments because they’re not being reminded.”

This may appear to be a reasonable assumption. Missed appointments cost the NHS time and money, and reminders feel like an obvious fix. But without testing it, there’s a risk of investing in the wrong solution. Using this approach, rather than designing a solution around the assumption straight away, teams would:

Phase 1: Surface the assumptions by bringing together service designers, NHS operational staff, policy leads, and analysts.

What emerges:

The issue shows up as high no-show rates in specific clinics

It affects patients with varying needs, including those with complex conditions

Touchpoints include appointment letters, SMS reminders, and booking systems

Key assumptions identified:

Patients forget appointments

Existing reminders aren’t reaching users

More frequent reminders would reduce no-shows

Outputs:

A clearly defined problem: missed appointments in specific contexts

A list of assumptions driving current thinking

Key questions: Are reminders the issue, or is something else at play (e.g. timing, accessibility, transport)?

Phase 2: Turn assumptions into hypotheses by reviewing existing data and what it indicates:

SMS delivery rates are high

Some patients confirm receipt but still don’t attend

Patterns show higher drop-off in certain demographics and appointment types

Hypothesis formed:

“If patients receive a reminder closer to the appointment time, attendance rates will improve.”

Other competing hypotheses might also emerge:

“Patients are unable to attend due to scheduling conflicts, not forgetfulness”

“Appointment details are unclear or difficult to act on”

Outputs:

A prioritised hypothesis to test

Evidence gaps identified (e.g. reasons behind non-attendance)

Phase 3: Define the smallest testable service change, one that requires minimal time and resource.

Proposed intervention:

Introduce a same-day SMS reminder for a subset of appointments

Include clearer instructions and an easy way to reschedule

Considerations:

Operational feasibility (can clinics handle rescheduling?)

Technical constraints (SMS system capability)

Policy alignment (data use, consent)

Outputs:

A scoped test affecting a small cohort

Clear definition of what’s changing and where

Phase 4: Introduce the change in a controlled way:

Applied to selected clinics or appointment types

Monitored over a defined time period

What’s tracked:

Attendance rates

Rescheduling behaviour

Operational impact (e.g. admin workload)

Outputs:

Real-world data on whether the reminder timing affects attendance

Early signals on unintended consequences

Phase 5: Decide and align as a team by reviewing the results together.

Possible outcomes:

Attendance improves → scale the approach

No significant change → revisit assumptions

Increased rescheduling but not attendance → refine hypothesis

Key learning:

The issue may not be about reminders alone but may also link to flexibility, clarity, or external factors affecting attendance.

Decision:

Iterate on the approach or test a different hypothesis (e.g. easier rebooking options)
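The three possible outcomes listed above amount to a simple decision rule, sketched below. This is a rough illustration under stated assumptions: the function name, the parameters, and the `min_change` threshold are hypothetical, rates are expressed as fractions between 0 and 1, and a fixed threshold stands in for whatever test of significance the team's analysts would actually apply.

```python
def recommend_next_step(baseline_attendance: float,
                        test_attendance: float,
                        baseline_reschedules: float,
                        test_reschedules: float,
                        min_change: float = 0.02) -> str:
    """Map test results onto the possible outcomes from the review session.

    min_change is a hypothetical threshold for a change that matters;
    it is not a statistical significance test.
    """
    attendance_up = (test_attendance - baseline_attendance) >= min_change
    reschedules_up = (test_reschedules - baseline_reschedules) >= min_change

    if attendance_up:
        return "scale the approach"
    if reschedules_up:
        # More rescheduling without better attendance.
        return "refine hypothesis"
    return "revisit assumptions"


if __name__ == "__main__":
    # Made-up numbers, purely to exercise each branch.
    print(recommend_next_step(0.80, 0.86, 0.05, 0.05))
    print(recommend_next_step(0.80, 0.80, 0.05, 0.12))
    print(recommend_next_step(0.80, 0.805, 0.05, 0.05))
```

In practice the recommendation would be an input to the team's discussion rather than the decision itself, since the qualitative feedback gathered during the test also needs interpreting.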

This example shows how a seemingly straightforward assumption can evolve once it’s properly examined. Instead of jumping straight to “send more reminders,” the team uses the loop to understand what’s really happening and respond accordingly. The result is a more informed decision, grounded in how the service actually works.

Why everything hinges on the framing phase

The framing phase sets the direction for everything that follows. If the problem isn’t properly understood, or if assumptions remain hidden, the rest of the loop simply reinforces the wrong thing more efficiently. Getting this phase right matters for several reasons:

It defines what problem you’re actually solving

It surfaces assumptions before they shape solutions

It connects policy intent to service reality

It sets the direction for all downstream activity

Rushing framing creates false alignment:

“Obvious” problems go unchallenged

Root causes get overlooked

Exclusion risks increase

To do this well, framing needs structure. Without it, conversations drift, assumptions stay implicit, and alignment becomes surface-level rather than real. A structured approach gives teams a way to slow down just enough to ask the right questions, surface what’s uncertain, and connect different perspectives into a shared understanding. This is where a deliberate problem framing framework with assumptions built in becomes essential. It does more than define the problem: it makes assumptions visible and usable so they can be tested and learned from as part of the wider design process.

Enabling safe testing in the live service

Testing in live services means there’s no clean separation between design and reality. Changes will affect real people, in real situations, often with little room for error. This approach creates the conditions to move forward without exposing users or operations to unnecessary risk.

Time-boxed, reversible changes: Every intervention is designed to be temporary and easy to roll back. If something doesn’t work, it can be stopped quickly without long-term consequences.

Clear success and safety indicators: Before testing begins, teams define what good looks like and what warning signs to watch for. This ensures issues are spotted early and acted on quickly.

Operational alignment before testing: Frontline teams are involved early, ensuring they’re prepared for any changes and can flag risks that may not be visible at a design level.

Continuous monitoring during tests: Data and feedback are reviewed in real time so adjustments can be made if needed.

Built-in governance checkpoints: Regular review points ensure that what’s being tested is understood, agreed, and proportionate, supporting accountability without slowing progress.

Protecting vulnerable users: Tests can be designed to exclude or carefully include sensitive user groups, ensuring no one is disproportionately impacted during early iterations.

Learning before scaling: Nothing is rolled out widely until there’s enough evidence to support it. This ensures that scaling is based on proven outcomes, not intent or assumption.

This shifts risk from being something discovered too late to something actively designed around from the start.
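To make the time box and the safety indicators concrete, here is a minimal sketch of a check a team might run each time monitoring data is reviewed. Everything named here is an assumption for illustration: the `Guardrails` record, the `check_test` function, the indicator name, and the idea that each safety indicator has a single upper limit agreed before the test starts.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Guardrails:
    """Limits agreed with frontline teams and governance before a test starts."""
    start: date
    max_days: int                    # length of the time box
    safety_limits: dict[str, float]  # indicator name -> highest acceptable value


def check_test(guardrails: Guardrails, today: date,
               observed: dict[str, float]) -> str:
    """Return 'continue', a stop message, or a rollback message.

    Indicators missing from `observed` are treated as not breached.
    """
    breached = [name for name, limit in guardrails.safety_limits.items()
                if observed.get(name, 0.0) > limit]
    if breached:
        # Safety comes first: roll back as soon as any limit is exceeded.
        return "roll back: " + ", ".join(sorted(breached))
    if (today - guardrails.start).days >= guardrails.max_days:
        return "stop: time box reached"
    return "continue"


if __name__ == "__main__":
    # Hypothetical indicator and limit, for illustration only.
    rails = Guardrails(date(2024, 1, 1), 14, {"admin_hours_per_week": 10.0})
    print(check_test(rails, date(2024, 1, 5), {"admin_hours_per_week": 6.0}))
    print(check_test(rails, date(2024, 1, 5), {"admin_hours_per_week": 12.5}))
    print(check_test(rails, date(2024, 1, 15), {"admin_hours_per_week": 6.0}))
```

Checking safety limits before the time box means a breach triggers a rollback even on the final day of the test.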

What changes when teams adopt this approach

Adopting this way of working changes how teams think, collaborate, and make decisions. Instead of pushing for agreement or relying on past experience, teams start to align around learning, evidence, and shared understanding.

Better conversations with policy: Policy colleagues are brought into the process of exploring what might work in practice, creating a more constructive relationship between intent and delivery.

More confidence in decision-making: Teams can point to evidence from real-world tests, making it easier to justify changes and move forward with clarity.

Reduced risk of exclusion: By surfacing assumptions early and testing them, teams are more likely to identify where services may not work for certain groups, which allows adjustments to be made before issues become embedded at scale.

Shift from opinion to evidence-led iteration: Ideas are treated as hypotheses, and progress is measured by insight gained rather than by agreement reached through informal discussion.

Stronger cross-disciplinary alignment: Policy, operations, design, and analysis work from a shared structure, reducing misinterpretation and ensuring everyone is contributing to the same learning goals.

Greater transparency and accountability: Decisions are easier to trace back to specific hypotheses, tests, and outcomes, creating a clearer narrative for stakeholders and governance forums.

How to start

This starts with a shift in how you see assumptions: not as something to eliminate, but as something to work with deliberately. When treated as assets, assumptions become the starting point for learning, not a hidden risk. That shift has a direct impact on inclusion and real-world outcomes. It helps teams catch where services may not work for certain groups before these issues scale, and it grounds decisions in how services actually operate, not just how they’re intended to operate.

Designing in complex systems will always involve assumptions. The difference is whether they stay hidden or become part of how you learn and improve. When teams start working this way, the impact isn’t just better services, but better decisions about who those services work for and why. A step-by-step breakdown of the problem framing framework used here, including templates and facilitation guidance, is covered in “A problem framing framework for challenging assumptions and driving better decisions”.